Top Website Tutorials & Smartphone News

Wednesday, 2 March 2016

Tuesday, 1 March 2016

Monday, 29 February 2016

Sunday, 28 February 2016

Friday, 26 February 2016

Thursday, 25 February 2016

Wednesday, 24 February 2016

Monday, 22 February 2016

Sunday, 21 February 2016

Friday, 12 February 2016

Color theory

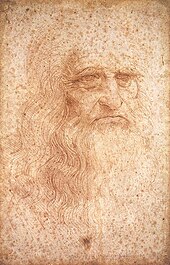

In the visual arts, color theory is a body of practical guidance to color mixing and the visual effects of specific color combinations. There are also definitions (or categories) of colors based on the color wheel: primary color, secondary color and tertiary color. Although color theory principles first appeared in the writings of Leone Battista Alberti (c. 1435) and the notebooks of Leonardo da Vinci (c. 1490), a tradition of "color theory" began in the 18th century, initially within a partisan controversy around Isaac Newton's theory of color (Opticks, 1704) and the nature of primary colors. From there it developed as an independent artistic tradition with only superficial reference to colorimetry and vision science.

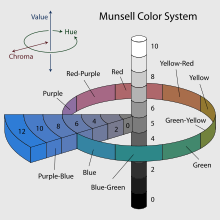

Munsell's color system represented as a three-dimensional solid showing all three color-making attributes: lightness, saturation and hue.

Color abstractions

The foundations of pre-20th-century color theory were built around "pure" or ideal colors, characterized by sensory experiences rather than attributes of the physical world. This has led to a number of inaccuracies in traditional color theory principles that are not always remedied in modern formulations.

The most important problem has been a confusion between the behavior of light mixtures, called additive color, and the behavior of paint, ink, dye, or pigment mixtures, called subtractive color. This problem arises because the absorption of light by material substances follows different rules from the perception of light by the eye.

A second problem has been the failure to describe the very important effects of strong luminance (lightness) contrasts in the appearance of colors reflected from a surface (such as paints or inks) as opposed to colors of light; "colors" such as browns or ochres cannot appear in mixtures of light. Thus, a strong lightness contrast between a mid-valued yellow paint and a surrounding bright white makes the yellow appear to be green or brown, while a strong brightness contrast between a rainbow and the surrounding sky makes the yellow in a rainbow appear to be a fainter yellow, or white.

A third problem has been the tendency to describe color effects holistically or categorically, for example as a contrast between "yellow" and "blue" conceived as generic colors, when most color effects are due to contrasts on three relative attributes that define all colors:

- lightness (light vs. dark, or white vs. black),

- saturation (intense vs. dull), and

- hue (e.g., red, orange, yellow, green, blue or purple).

Thus, the visual impact of "yellow" vs. "blue" hues in visual design depends on the relative lightness and intensity of the hues.
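To make the point about relative lightness concrete, the sketch below computes the relative luminance of pure sRGB yellow and pure sRGB blue, using the sRGB transfer curve and the Rec. 709 luminance weights (the same formula WCAG uses for contrast ratios). Luminance is only a proxy for perceived lightness, and choosing sRGB as the color space is an assumption for illustration.

```python
def srgb_to_linear(c: float) -> float:
    """Undo the sRGB transfer curve for one channel value in [0, 1]."""
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(r: int, g: int, b: int) -> float:
    """Relative luminance (0 = black, 1 = white) of an 8-bit sRGB color."""
    rl, gl, bl = (srgb_to_linear(v / 255) for v in (r, g, b))
    # Rec. 709 weights: green contributes most to perceived brightness.
    return 0.2126 * rl + 0.7152 * gl + 0.0722 * bl


print(f"yellow: {relative_luminance(255, 255, 0):.4f}")  # yellow: 0.9278
print(f"blue:   {relative_luminance(0, 0, 255):.4f}")    # blue:   0.0722
```

Although both are fully saturated hues, the yellow comes out roughly thirteen times more luminous than the blue, so a "yellow vs. blue" contrast is as much a lightness contrast as a hue contrast.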

These confusions are partly historical, and arose from scientific uncertainty about color perception that was not resolved until the late 19th century, when the artistic notions were already entrenched. However, they also arise from the attempt to describe the highly contextual and flexible behavior of color perception in terms of abstract color sensations that can be generated equivalently by any visual medium.

Many historical "color theorists" have assumed that three "pure" primary colors can mix all possible colors, and that any failure of specific paints or inks to match this ideal performance is due to the impurity or imperfection of the colorants. In reality, only imaginary "primary colors" used in colorimetry can "mix" or quantify all visible (perceptually possible) colors; but to do this, these imaginary primaries are defined as lying outside the range of visible colors; i.e., they cannot be seen. Any three real "primary" colors of light, paint or ink can mix only a limited range of colors, called a gamut, which is always smaller (contains fewer colors) than the full range of colors humans can perceive.

Historical background

Color theory was originally formulated in terms of three "primary" or "primitive" colors—red, yellow and blue (RYB)—because these colors were believed capable of mixing all other colors. This color mixing behavior had long been known to printers, dyers and painters, but these trades preferred pure pigments to primary color mixtures, because the mixtures were too dull (unsaturated).

The RYB primary colors became the foundation of 18th-century theories of color vision, as the fundamental sensory qualities that are blended in the perception of all physical colors and equally in the physical mixture of pigments or dyes. These theories were enhanced by 18th-century investigations of a variety of purely psychological color effects, in particular the contrast between "complementary" or opposing hues that are produced by color afterimages and in the contrasting shadows in colored light. These ideas and many personal color observations were summarized in two founding documents in color theory: the Theory of Colours (1810) by the German poet and government minister Johann Wolfgang von Goethe, and The Law of Simultaneous Color Contrast (1839) by the French industrial chemist Michel Eugène Chevreul.

Subsequently, German and English scientists established in the late 19th century that color perception is best described in terms of a different set of primary colors (red, green and blue-violet, or RGB), modeled through the additive mixture of three monochromatic lights. Later research anchored these primary colors in the differing responses to light by three types of color receptors or cones in the retina (trichromacy). On this basis the quantitative description of color mixture, or colorimetry, developed in the early 20th century, along with a series of increasingly sophisticated models of color space and color perception, such as the opponent process theory.

Traditional color theory

Complementary colors

For the mixing of colored light, Isaac Newton's color wheel is often used to describe complementary colors, which are colors which cancel each other's hue to produce an achromatic (white, gray or black) light mixture. Newton offered as a conjecture that colors exactly opposite one another on the hue circle cancel out each other's hue; this concept was demonstrated more thoroughly in the 19th century.

A key assumption in Newton's hue circle was that the "fiery" or maximum saturated hues are located on the outer circumference of the circle, while achromatic white is at the center. Then the saturation of the mixture of two spectral hues was predicted by the straight line between them; the mixture of three colors was predicted by the "center of gravity" or centroid of three triangle points, and so on.
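Newton's centroid rule can be sketched in a few lines. This is a simplified model under stated assumptions: hues are placed at chosen angles on a unit circle (Newton's own circle divided the hues into unequal arcs), saturation is the distance from the white center, and the amount of each color is a weight. The predicted mixture is the weighted centroid; its angle is the mixed hue and its radius the mixed saturation. The function names are illustrative.

```python
import math


def hue_point(hue_deg: float, saturation: float = 1.0) -> tuple[float, float]:
    """Place a color on the hue circle: angle = hue, radius = saturation."""
    a = math.radians(hue_deg)
    return (saturation * math.cos(a), saturation * math.sin(a))


def mix(colors: list[tuple[float, float, float]]) -> tuple[float, float]:
    """Weighted centroid of (hue_deg, saturation, weight) triples.

    Returns (hue_deg, saturation) of the predicted mixture. A saturation
    near 0 means the mixture sits at the achromatic (white) center.
    """
    total = sum(w for _, _, w in colors)
    points = [(hue_point(h, s), w) for h, s, w in colors]
    x = sum(p[0] * w for p, w in points) / total
    y = sum(p[1] * w for p, w in points) / total
    return (math.degrees(math.atan2(y, x)) % 360, math.hypot(x, y))


# Two hues 60 degrees apart: the mix lies between them and is less saturated.
print(mix([(0, 1, 1), (60, 1, 1)]))   # about (30.0, 0.866)
# Two opposite hues in equal amounts cancel toward the achromatic center.
print(mix([(0, 1, 1), (180, 1, 1)]))  # saturation about 0
```

The same function handles three or more colors, giving the "center of gravity" of the triangle (or polygon) of their points.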

According to traditional color theory based on subtractive primary colors and the RYB color model, which is derived from paint mixtures, yellow and violet, orange and blue, and red and green are the painter's complementary pairs: each pair mixed together produces an equivalent gray. These contrasts form the basis of Chevreul's law of color contrast: colors that appear together will be altered as if mixed with the complementary color of the other color. Thus, a piece of yellow fabric placed on a blue background will appear tinted orange, because orange is the complementary color to blue.

However, when complementary colors are chosen based on definition by light mixture, they are not the same as the artists' primary colors. This discrepancy becomes important when color theory is applied across media. Digital color management uses a hue circle defined around the additive primary colors (the RGB color model), as the colors in a computer monitor are additive mixtures of light, not subtractive mixtures of paints.
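On the additive hue circle used in digital color management, a complement can be found by rotating the hue by 180 degrees. The sketch below does this with Python's standard `colorsys` module in the HLS model, keeping lightness and saturation fixed; treating HLS hue as the RGB hue circle is a simplifying assumption, and `rgb_complement` is a hypothetical helper name.

```python
import colorsys


def rgb_complement(r: int, g: int, b: int) -> tuple[int, int, int]:
    """Complement on the additive (RGB) hue circle: rotate hue by 180 degrees."""
    h, l, s = colorsys.rgb_to_hls(r / 255, g / 255, b / 255)
    # colorsys expresses hue as a fraction of a full turn, so 0.5 = 180 degrees.
    r2, g2, b2 = colorsys.hls_to_rgb((h + 0.5) % 1.0, l, s)
    return tuple(round(c * 255) for c in (r2, g2, b2))


print(rgb_complement(0, 0, 255))  # blue -> (255, 255, 0), yellow
print(rgb_complement(255, 0, 0))  # red  -> (0, 255, 255), cyan
```

On this wheel blue's complement is yellow and red's is cyan, whereas the RYB painter's wheel pairs blue with orange and red with green, which is the cross-media discrepancy the paragraph describes.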

One reason the artist's primary colors work at all is that the imperfect pigments being used have sloped absorption curves, and thus change color with concentration. A pigment that is pure red at high concentrations can behave more like magenta at low concentrations. This allows it to make purples that would otherwise be impossible. Likewise, a blue that is ultramarine at high concentrations appears cyan at low concentrations, allowing it to be used to mix green. Chromium red pigments can appear orange, and then yellow, as the concentration is reduced. It is even possible to mix very low concentrations of the blue mentioned and the chromium red to get a greenish color. This works much better with oil colors than it does with watercolors and dyes.

So the old primaries depend on sloped absorption curves and pigment leakages to work, while newer scientifically derived ones depend solely on controlling the amount of absorption in certain parts of the spectrum.

Another reason the correct primary colors were not used by early artists is that they were not available as durable pigments. Modern methods in chemistry were needed to produce them.

Warm vs. cool colors

The distinction between 'warm' and 'cool' colors has been important since at least the late 18th century. It is generally not remarked in modern color science or colorimetry in reference to painting, but is still used in design practices today. The contrast, as traced by etymologies in the Oxford English Dictionary, seems related to the observed contrast in landscape light, between the "warm" colors associated with daylight or sunset and the "cool" colors associated with a gray or overcast day. Warm colors are often said to be hues from red through yellow, browns and tans included; cool colors are often said to be the hues from blue green through blue violet, most grays included. There is historical disagreement about the colors that anchor the polarity, but 19th-century sources put the peak contrast between red orange and greenish blue.

Color theory has attributed perceptual and psychological effects to this contrast. Warm colors are said to advance or appear more active in a painting, while cool colors tend to recede; used in interior design or fashion, warm colors are said to arouse or stimulate the viewer, while cool colors calm and relax. Most of these effects, to the extent they are real, can be attributed to the higher saturation and lighter value of warm pigments in contrast to cool pigments. Thus, brown is a dark, unsaturated warm color that few people think of as visually active or psychologically arousing.

Contrast the traditional warm–cool association of color with the color temperature of a theoretical radiating black body, where the association of color with temperature is reversed. For instance, the hottest stars radiate blue light (i.e., with shorter wavelength and higher frequency) and the coolest radiate red.
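The reversal can be quantified with Wien's displacement law, λ_max = b / T, which gives the wavelength at which a black body at temperature T radiates most strongly. The temperatures below are round illustrative values, not measurements of particular stars, and `peak_wavelength_nm` is a hypothetical helper name.

```python
# Wien's displacement constant b, in metre-kelvins (exact SI value).
WIEN_B = 2.897771955e-3


def peak_wavelength_nm(temperature_k: float) -> float:
    """Wavelength (nm) at which a black body at this temperature peaks."""
    return WIEN_B / temperature_k * 1e9


for name, t in [("cool red star, ~3000 K", 3000),
                ("Sun, ~5800 K", 5800),
                ("hot blue star, ~20000 K", 20000)]:
    print(f"{name}: peak near {peak_wavelength_nm(t):.0f} nm")
```

The peak moves to shorter wavelengths as temperature rises. For the coolest stars it falls in the infrared and for the hottest in the ultraviolet; what the eye sees is the slope of the spectrum across the visible band, reddish for cool bodies and bluish for hot ones.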

Any color that lacks strong chromatic content is said to be unsaturated, achromatic, near neutral, or neutral. Near neutrals include browns, tans, pastels and darker colors. Near neutrals can be of any hue or lightness. Pure achromatic, or neutral colors include black, white and all grays.

Near neutrals are obtained by mixing pure colors with white, black or gray, or by mixing two complementary colors. In color theory, neutral colors are easily modified by adjacent more saturated colors and they appear to take on the hue complementary to the saturated color; e.g.: next to a bright red couch, a gray wall will appear distinctly greenish.

Black and white have long been known to combine well with almost any other colors; black decreases the apparent saturation or brightness of colors paired with it, and white shows off all hues to equal effect.

Tints and shades

When mixing colored light (additive color models), the achromatic mixture of spectrally balanced red, green and blue (RGB) is always white, not gray or black. When we mix colorants, such as the pigments in paint mixtures, a color is produced which is always darker and lower in chroma, or saturation, than the parent colors. This moves the mixed color toward a neutral color—a gray or near-black. Lights are made brighter or dimmer by adjusting their brightness, or energy level; in painting, lightness is adjusted through mixture with white, black or a color's complement.
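The difference between the two kinds of mixture can be shown with a deliberately idealised model: lights add their intensities channel by channel, while colorants act like filters whose transmitted fractions multiply. Real paints also scatter light and do not mix this simply (models such as Kubelka–Munk address that), so treat this as an illustration of the principle only; the function names are hypothetical.

```python
def mix_additive(*lights: tuple[float, float, float]) -> tuple[float, ...]:
    """Additive mixing: light intensities add per channel (clipped at 1.0)."""
    return tuple(min(1.0, sum(channel)) for channel in zip(*lights))


def mix_subtractive(*filters: tuple[float, float, float]) -> tuple[float, ...]:
    """Idealised subtractive mixing: each colorant passes a fraction of each
    channel, so the fractions multiply and the result can only get darker."""
    result = [1.0, 1.0, 1.0]
    for f in filters:
        result = [r * c for r, c in zip(result, f)]
    return tuple(result)


red, green, blue = (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)
cyan, magenta, yellow = (0.0, 1.0, 1.0), (1.0, 0.0, 1.0), (1.0, 1.0, 0.0)

print(mix_additive(red, green, blue))          # (1.0, 1.0, 1.0): white
print(mix_subtractive(cyan, magenta, yellow))  # (0.0, 0.0, 0.0): black
print(mix_subtractive(cyan, yellow))           # (0.0, 1.0, 0.0): green
```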

It is common among some painters to darken a paint color by adding black paint, producing colors called shades, or to lighten a color by adding white, producing colors called tints. However, this is not always the best way for representational painting, as an unfortunate result is for colors to also shift in hue. For instance, darkening a color by adding black can cause colors such as yellows, reds and oranges to shift toward the greenish or bluish part of the spectrum. Lightening a color by adding white can cause a shift towards blue when mixed with reds and oranges. Another practice when darkening a color is to use its opposite, or complementary, color (e.g. purplish-red added to yellowish-green) in order to neutralize it without a shift in hue, and darken it if the added color is darker than the parent color. When lightening a color, this hue shift can be corrected with the addition of a small amount of an adjacent color to bring the hue of the mixture back in line with the parent color (e.g. adding a small amount of orange to a mixture of red and white will correct the tendency of this mixture to shift slightly towards the blue end of the spectrum).
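In digital design, tints and shades are often approximated by interpolating a color toward white or black in RGB. The sketch below, with hypothetical helper names `blend`, `tint` and `shade`, shows that arithmetic; it is a screen-color convenience and does not model the pigment hue shifts described for paint.

```python
def blend(color: tuple[int, int, int], target: tuple[int, int, int],
          amount: float) -> tuple[int, ...]:
    """Move `color` a fraction `amount` (0..1) of the way toward `target`."""
    return tuple(round(c + (t - c) * amount) for c, t in zip(color, target))


def tint(color: tuple[int, int, int], amount: float) -> tuple[int, ...]:
    """Lighten by mixing toward white."""
    return blend(color, (255, 255, 255), amount)


def shade(color: tuple[int, int, int], amount: float) -> tuple[int, ...]:
    """Darken by mixing toward black."""
    return blend(color, (0, 0, 0), amount)


orange = (255, 128, 0)
print(tint(orange, 0.5))   # (255, 192, 128)
print(shade(orange, 0.5))  # (128, 64, 0)
```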

Split primary colors

In painting and other visual arts, two-dimensional color wheels or three-dimensional color solids are used as tools to teach beginners the essential relationships between colors. The organization of colors in a particular color model depends on the purpose of that model: some models show relationships based on human color perception, whereas others are based on the color mixing properties of a particular medium such as a computer display or set of paints.

This system is still popular among contemporary painters, as it is basically a simplified version of Newton's geometrical rule that colors closer together on the hue circle will produce more vibrant mixtures. However, with the range of contemporary paints available, many artists simply add more paints to their palette as desired for a variety of practical reasons. For example, they may add a scarlet, purple and/or green paint to expand the mixable gamut; and they include one or more dark colors (especially "earth" colors such as yellow ochre or burnt sienna) simply because they are convenient to have premixed. Printers commonly augment a CMYK palette with spot (trademark-specific) ink colors.

Color harmony

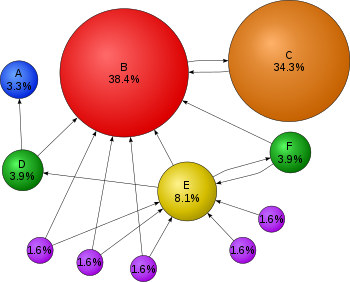

It has been suggested that "Colors seen together to produce a pleasing affective response are said to be in harmony". However, color harmony is a complex notion because human responses to color are both affective and cognitive, involving emotional response and judgement. Hence, our responses to color and the notion of color harmony are open to the influence of a range of different factors. These factors include individual differences (such as age, gender, personal preference, affective state, etc.) as well as cultural, sub-cultural and socially based differences, which give rise to conditioning and learned responses about color. In addition, context always has an influence on responses about color and the notion of color harmony, and this concept is also influenced by temporal factors (such as changing trends) and perceptual factors (such as simultaneous contrast) which may impinge on human response to color. The following conceptual model illustrates this 21st-century approach to color harmony:

Color harmony = f(Col 1, 2, 3, …, n) · (ID + CE + CX + P + T)

Here, color harmony is a function (f) of the interaction between color/s (Col 1, 2, 3, …, n) and the factors that influence positive aesthetic response to color: individual differences (ID) such as age, gender, personality and affective state; cultural experiences (CE); the prevailing context (CX), which includes setting and ambient lighting; intervening perceptual effects (P); and the effects of time (T) in terms of prevailing social trends.

In addition, given that humans can perceive over 2.8 million different hues, it has been suggested that the number of possible color combinations is virtually infinite, implying that predictive color harmony formulae are fundamentally unsound. Despite this, many color theorists have devised formulae, principles or guidelines for color combination with the aim of predicting or specifying positive aesthetic response, or "color harmony". Color wheel models have often been used as a basis for color combination principles or guidelines and for defining relationships between colors. Some theorists and artists believe juxtapositions of complementary colors will produce strong contrast, a sense of visual tension as well as "color harmony"; others believe juxtapositions of analogous colors will elicit positive aesthetic response. Color combination guidelines suggest that colors next to each other on the color wheel model (analogous colors) tend to produce a single-hued or monochromatic color experience, and some theorists also refer to these as "simple harmonies". Split complementary color schemes usually depict a modified complementary pair: instead of the "true" second color, a range of analogous hues around it is chosen, i.e. the split complements of red are blue-green and yellow-green. A triadic color scheme adopts any three colors approximately equidistant around a color wheel model. Feisner and Mahnke are among a number of authors who provide color combination guidelines in greater detail.
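The wheel-based schemes in the paragraph reduce to fixed step offsets around a color wheel. The sketch below assumes a 12-step painter's (RYB) wheel, the layout on which the split complements of red come out as yellow-green and blue-green; the wheel order and function names are illustrative choices, and, as the paragraph notes, such rules do not predict aesthetic response.

```python
# A 12-step painter's (RYB) wheel, in order; assumed layout for illustration.
RYB_WHEEL = [
    "red", "red-orange", "orange", "yellow-orange", "yellow", "yellow-green",
    "green", "blue-green", "blue", "blue-violet", "violet", "red-violet",
]


def pick(base: str, steps: list[int]) -> list[str]:
    """Colors at the given step offsets from `base` around the wheel."""
    i = RYB_WHEEL.index(base)
    return [RYB_WHEEL[(i + s) % len(RYB_WHEEL)] for s in steps]


def complementary(base: str) -> list[str]:
    return pick(base, [0, 6])        # directly opposite


def analogous(base: str) -> list[str]:
    return pick(base, [-1, 0, 1])    # immediate neighbours


def split_complementary(base: str) -> list[str]:
    return pick(base, [0, 5, 7])     # the two hues flanking the complement


def triadic(base: str) -> list[str]:
    return pick(base, [0, 4, 8])     # three evenly spaced hues


print(split_complementary("red"))  # ['red', 'yellow-green', 'blue-green']
print(triadic("red"))              # ['red', 'yellow', 'blue']
print(analogous("red"))            # ['red-violet', 'red', 'red-orange']
```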

Wednesday, 10 February 2016

Tuesday, 9 February 2016

Search engine optimization (SEO)

Search engine optimization (SEO) is the process of affecting the visibility of a website or a web page in a search engine's unpaid results, often referred to as "natural," "organic," or "earned" results. In general, the earlier (or higher ranked on the search results page) and more frequently a site appears in the search results list, the more visitors it will receive from the search engine's users. SEO may target different kinds of search, including image search, local search, video search, academic search, news search and industry-specific vertical search engines.


As an Internet marketing strategy, SEO considers how search engines work, what people search for, the actual search terms or keywords typed into search engines and which search engines are preferred by their targeted audience. Optimizing a website may involve editing its content, HTML and associated coding both to increase its relevance to specific keywords and to remove barriers to the indexing activities of search engines. Promoting a site to increase the number of backlinks, or inbound links, is another SEO tactic. As of May 2015, mobile search has surpassed desktop search; Google is developing and pushing mobile search as the future in all of its products, and many brands are beginning to take a different approach to their Internet strategies.


History

Webmasters and content providers began optimizing sites for search engines in the mid-1990s, as the first search engines were cataloging the early Web. Initially, all webmasters needed to do was to submit the address of a page, or URL, to the various engines which would send a "spider" to "crawl" that page, extract links to other pages from it, and return information found on the page to be indexed. The process involves a search engine spider downloading a page and storing it on the search engine's own server, where a second program, known as an indexer, extracts various information about the page, such as the words it contains and where these are located, as well as any weight for specific words, and all links the page contains, which are then placed into a scheduler for crawling at a later date.
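The indexer step can be sketched with Python's standard `html.parser`: it records where each word occurs on the page and collects the page's links, resolved to absolute URLs, for a later crawl. The class and variable names are hypothetical, and a real indexer would also skip script and style text, honour robots directives and weight words by their HTML context.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class PageIndexer(HTMLParser):
    """Collects word positions and outgoing links from one HTML page."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.links: list[str] = []               # queued for a later crawl
        self.positions: dict[str, list[int]] = {}
        self._word_count = 0

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                # Resolve relative links so the scheduler gets absolute URLs.
                self.links.append(urljoin(self.base_url, href))

    def handle_data(self, data):
        for word in data.lower().split():
            word = word.strip(".,;:!?\"'()")
            if word:
                self.positions.setdefault(word, []).append(self._word_count)
                self._word_count += 1


html = """<html><body><h1>Color theory</h1>
<p>Read about <a href="/seo.html">SEO</a> and <a href="https://example.com/">more</a>.</p>
</body></html>"""

indexer = PageIndexer("https://example.org/blog/")
indexer.feed(html)
indexer.close()
print(indexer.positions["seo"])  # [4]
print(indexer.links)  # ['https://example.org/seo.html', 'https://example.com/']
```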

Site owners started to recognize the value of having their sites highly ranked and visible in search engine results, creating an opportunity for both white hat and black hat SEO practitioners. According to industry analyst Danny Sullivan, the phrase "search engine optimization" probably came into use in 1997. Sullivan credits Bruce Clay as being one of the first people to popularize the term. On May 2, 2007, Jason Gambert attempted to trademark the term SEO by convincing the Trademark Office in Arizona that SEO is a "process" involving manipulation of keywords, and not a "marketing service."

Early versions of search algorithms relied on webmaster-provided information such as the keyword meta tag, or index files in engines like ALIWEB. Meta tags provide a guide to each page's content. Using meta data to index pages was found to be less than reliable, however, because the webmaster's choice of keywords in the meta tag could potentially be an inaccurate representation of the site's actual content. Inaccurate, incomplete, and inconsistent data in meta tags could and did cause pages to rank for irrelevant searches. Web content providers also manipulated a number of attributes within the HTML source of a page in an attempt to rank well in search engines.

Some search engines have also reached out to the SEO industry, and are frequent sponsors and guests at SEO conferences, chats, and seminars. Major search engines provide information and guidelines to help with site optimization. Google has a Sitemaps program to help webmasters learn if Google is having any problems indexing their website and also provides data on Google traffic to the website. Bing Webmaster Tools provides a way for webmasters to submit a sitemap and web feeds, allows users to determine the crawl rate, and lets them track the index status of their web pages.

Relationship with Google

In 1998, graduate students at Stanford University, Larry Page and Sergey Brin, developed "Backrub," a search engine that relied on a mathematical algorithm to rate the prominence of web pages. The number calculated by the algorithm, PageRank, is a function of the quantity and strength of inbound links. PageRank estimates the likelihood that a given page will be reached by a web user who randomly surfs the web and follows links from one page to another. In effect, this means that some links are stronger than others, as a higher PageRank page is more likely to be reached by the random surfer.
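The random-surfer idea can be sketched with the textbook power-iteration form of PageRank: with damping factor d (commonly 0.85), each page's score is (1 − d)/N plus d times the sum, over pages linking to it, of their score divided by their number of outgoing links. This follows the published formulation, not Google's production ranking, and the tiny four-page graph is made up for illustration.

```python
def pagerank(links: dict[str, list[str]], damping: float = 0.85,
             iterations: int = 50) -> dict[str, float]:
    """Power-iteration PageRank over a small link graph.

    `links` maps each page to the pages it links to. A page with no
    outgoing links is treated as linking to every page (a common convention).
    """
    pages = list(links)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page gets the "random jump" share of (1 - d) / N.
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page, outlinks in links.items():
            targets = outlinks or pages
            # A page splits its damped score evenly over its outgoing links.
            share = damping * rank[page] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank


web = {
    "A": ["B", "C"],
    "B": ["C"],
    "C": ["A"],
    "D": ["C"],
}
ranks = pagerank(web)
for page, score in sorted(ranks.items(), key=lambda kv: -kv[1]):
    print(f"{page}: {score:.3f}")
```

Page D has no inbound links, so it keeps only the baseline (1 − d)/N; page C, linked from three pages, ends up with the highest score.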

Page and Brin founded Google in 1998. Google attracted a loyal following among the growing number of Internet users, who liked its simple design. Off-page factors (such as PageRank and hyperlink analysis) were considered as well as on-page factors (such as keyword frequency, meta tags, headings, links and site structure) to enable Google to avoid the kind of manipulation seen in search engines that only considered on-page factors for their rankings. Although PageRank was more difficult to game, webmasters had already developed link building tools and schemes to influence the Inktomi search engine, and these methods proved similarly applicable to gaming PageRank. Many sites focused on exchanging, buying, and selling links, often on a massive scale. Some of these schemes, or link farms, involved the creation of thousands of sites for the sole purpose of link spamming.

By 2004, search engines had incorporated a wide range of undisclosed factors in their ranking algorithms to reduce the impact of link manipulation. In June 2007, The New York Times' Saul Hansell stated Google ranks sites using more than 200 different signals. The leading search engines, Google, Bing, and Yahoo, do not disclose the algorithms they use to rank pages. Some SEO practitioners have studied different approaches to search engine optimization, and have shared their personal opinions. Patents related to search engines can provide information to better understand search engines.

In 2005, Google began personalizing search results for each user. Depending on their history of previous searches, Google crafted results for logged-in users. In 2008, Bruce Clay said that "ranking is dead" because of personalized search. He opined that it would become meaningless to discuss how a website ranked, because its rank would potentially be different for each user and each search.

In 2007, Google announced a campaign against paid links that transfer PageRank. On June 15, 2009, Google disclosed that they had taken measures to mitigate the effects of PageRank sculpting by use of the nofollow attribute on links. Matt Cutts, a well-known software engineer at Google, announced that Googlebot would no longer treat nofollowed links in the same way, in order to prevent SEO service providers from using nofollow for PageRank sculpting. As a result of this change, the usage of nofollow leads to evaporation of PageRank. In order to avoid this, SEO engineers developed alternative techniques that replace nofollowed tags with obfuscated JavaScript and thus permit PageRank sculpting. Additionally, several solutions have been suggested that include the usage of iframes, Flash and JavaScript.
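The "evaporation" can be illustrated with simplified arithmetic. Assume, for this sketch, that a page passes a damped share of its PageRank divided among its links: before the change, nofollowed links were simply left out of the divisor; after it, they still count in the divisor but pass nothing on. Google does not publish its actual computation, so the function name and numbers are illustrative only.

```python
def passed_per_link(page_rank: float, followed: int, nofollowed: int,
                    after_2009: bool, damping: float = 0.85) -> float:
    """PageRank passed through each followed link under a simplified model."""
    if after_2009:
        # Nofollowed links still count in the divisor; their share is lost.
        divisor = followed + nofollowed
    else:
        # Nofollowed links were ignored, so followed links split everything.
        divisor = followed
    return damping * page_rank / divisor


before = passed_per_link(1.0, followed=5, nofollowed=5, after_2009=False)
after = passed_per_link(1.0, followed=5, nofollowed=5, after_2009=True)
print(f"{before:.3f} {after:.3f}")  # 0.170 0.085
```

With five followed and five nofollowed links, each followed link now passes half as much as before, and the nofollowed half of the page's outgoing PageRank is simply lost.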

In December 2009, Google announced it would be using the web search history of all its users in order to populate search results.

On June 8, 2010, a new web indexing system called Google Caffeine was announced. Designed to allow users to find news results, forum posts and other content much sooner after publishing than before, Google Caffeine changed the way Google updated its index so that new content would show up in Google's results more quickly. According to Carrie Grimes, the software engineer who announced Caffeine for Google, "Caffeine provides 50 percent fresher results for web searches than our last index..."

Google Instant, real-time search, was introduced in late 2010 in an attempt to make search results more timely and relevant. Historically, site administrators have spent months or even years optimizing a website to increase search rankings. With the growth in popularity of social media sites and blogs, the leading engines made changes to their algorithms to allow fresh content to rank quickly within the search results.

In February 2011, Google announced the Panda update, which penalizes websites containing content duplicated from other websites and sources. Historically, websites have copied content from one another and benefited in search engine rankings by engaging in this practice; however, Google implemented a new system which punishes sites whose content is not unique. The 2012 Google Penguin update attempted to penalize websites that used manipulative techniques to improve their rankings on the search engine, and the 2013 Google Hummingbird update featured an algorithm change designed to improve Google's natural language processing and semantic understanding of web pages.

Methods

1-Getting indexed

The leading search engines, such as Google, Bing and Yahoo!, use crawlers to find pages for their algorithmic search results. Pages that are linked from other search engine indexed pages do not need to be submitted because they are found automatically. Two major directories, the Yahoo Directory and DMOZ, both require manual submission and human editorial review. Google offers Google Search Console, for which an XML Sitemap feed can be created and submitted for free to ensure that all pages are found, especially pages that are not discoverable by automatically following links, in addition to their URL submission console. Yahoo! formerly operated a paid submission service that guaranteed crawling for a cost per click; this was discontinued in 2009.
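A minimal XML Sitemap following the sitemaps.org protocol can be generated with Python's standard library, as sketched below. In that protocol `<urlset>`, `<url>` and `<loc>` are required and `<lastmod>` is optional; the URLs and dates are placeholders, and `build_sitemap` is a hypothetical helper name.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[tuple[str, str]]) -> str:
    """Build a sitemap document from (location, last-modified date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in urls:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc          # required
        ET.SubElement(url, "lastmod").text = lastmod  # optional, W3C date
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


print(build_sitemap([
    ("https://example.com/", "2016-02-09"),
    ("https://example.com/color-theory.html", "2016-02-12"),
]))
```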

Search engine crawlers may look at a number of different factors when crawling a site. Not every page is indexed by the search engines. Distance of pages from the root directory of a site may also be a factor in whether or not pages get crawled.
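If "distance" is read as click depth, the minimum number of links a crawler must follow from the home page, it can be computed with a breadth-first search over a site's internal links. This interpretation, the function name and the example site structure are assumptions for illustration; pages not reachable by links get no depth at all, which is one reason sitemaps help.

```python
from collections import deque


def crawl_depths(links: dict[str, list[str]], root: str) -> dict[str, int]:
    """Minimum number of clicks from the root page to each reachable page."""
    depth = {root: 0}
    queue = deque([root])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:
                # First time seen in BFS order = shortest click path.
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


site = {
    "/": ["/blog/", "/about.html"],
    "/blog/": ["/blog/2016/"],
    "/blog/2016/": ["/blog/2016/02/color-theory.html"],
}
print(crawl_depths(site, "/"))
```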

2-Preventing crawling

To avoid undesirable content in the search indexes, webmasters can instruct spiders not to crawl certain files or directories through the standard robots.txt file in the root directory of the domain. Additionally, a page can be explicitly excluded from a search engine's database by using a meta tag specific to robots. When a search engine visits a site, the robots.txt located in the root directory is the first file crawled. The robots.txt file is then parsed, and will instruct the robot as to which pages are not to be crawled. As a search engine crawler may keep a cached copy of this file, it may on occasion crawl pages a webmaster does not wish crawled. Pages typically prevented from being crawled include login specific pages such as shopping carts and user-specific content such as search results from internal searches. In March 2007, Google warned webmasters that they should prevent indexing of internal search results because those pages are considered search spam.
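Python's standard `urllib.robotparser` applies robots.txt rules the way a well-behaved crawler would. The sketch below parses an example file that blocks a shopping cart and internal search results, then checks a few paths; the rules, the domain and the user-agent name `ExampleBot` are made up. The per-page alternative is a tag such as `<meta name="robots" content="noindex">` in the page's `<head>`. Either way, compliance is voluntary on the crawler's part.

```python
from urllib.robotparser import RobotFileParser

robots_txt = """\
User-agent: *
Disallow: /cart/
Disallow: /search
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

for path in ["/", "/cart/checkout", "/search?q=color", "/blog/seo.html"]:
    url = "https://example.com" + path
    allowed = parser.can_fetch("ExampleBot", url)
    print(path, "allowed" if allowed else "blocked")
```

The `/search?q=color` URL is blocked because Disallow rules match by path prefix.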

3-Increasing prominence

A variety of methods can increase the prominence of a webpage within the search results. Cross linking between pages of the same website to provide more links to important pages may improve its visibility. Writing content that includes frequently searched keyword phrases, so as to be relevant to a wide variety of search queries, will tend to increase traffic. Updating content so as to keep search engines crawling back frequently can give additional weight to a site. Adding relevant keywords to a web page's metadata, including the title tag and meta description, will tend to improve the relevancy of a site's search listings, thus increasing traffic. URL normalization of web pages accessible via multiple URLs, using the canonical link element or via 301 redirects, can help make sure links to different versions of the URL all count towards the page's link popularity score.
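Part of URL normalization can be done mechanically before comparing links. The sketch below applies a few generic rules (lowercase scheme and host, drop the scheme's default port, use "/" for an empty path, drop the fragment); it discards any user:password part as a simplification. Site-specific choices such as www versus non-www or trailing slashes are what the canonical link element (`<link rel="canonical" href="...">`) or a 301 redirect settle, and this hypothetical `normalize_url` helper does not attempt them.

```python
from urllib.parse import urlsplit, urlunsplit

DEFAULT_PORTS = {"http": 80, "https": 443}


def normalize_url(url: str) -> str:
    """Reduce equivalent spellings of a URL to one canonical form."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    host = (parts.hostname or "").lower()
    # Keep the port only when it is not the scheme's default.
    if parts.port and parts.port != DEFAULT_PORTS.get(scheme):
        host = f"{host}:{parts.port}"
    path = parts.path or "/"
    # Drop the fragment: browsers never send it to the server.
    return urlunsplit((scheme, host, path, parts.query, ""))


variants = [
    "HTTP://Example.COM:80/color-theory.html#history",
    "http://example.com/color-theory.html",
]
print({normalize_url(u) for u in variants})
```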

4-White hat versus black hat techniques

SEO techniques can be classified into two broad categories: techniques that search engines recommend as part of good design, and those techniques of which search engines do not approve. The search engines attempt to minimize the effect of the latter, among them spamdexing. Industry commentators have classified these methods, and the practitioners who employ them, as either white hat SEO or black hat SEO. White hats tend to produce results that last a long time, whereas black hats anticipate that their sites may eventually be banned either temporarily or permanently once the search engines discover what they are doing.

An SEO technique is considered white hat if it conforms to the search engines' guidelines and involves no deception. As the search engine guidelines are not written as a series of rules or commandments, this is an important distinction to note. White hat SEO is not just about following guidelines, but is about ensuring that the content a search engine indexes and subsequently ranks is the same content a user will see. White hat advice is generally summed up as creating content for users, not for search engines, and then making that content easily accessible to the spiders, rather than attempting to trick the algorithm from its intended purpose. White hat SEO is in many ways similar to web development that promotes accessibility, although the two are not identical.

Black hat SEO attempts to improve rankings in ways that are disapproved of by the search engines, or involve deception. One black hat technique uses text that is hidden, either as text colored similar to the background, in an invisible div, or positioned off screen. Another method gives a different page depending on whether the page is being requested by a human visitor or a search engine, a technique known as cloaking.

Another category sometimes used is grey hat SEO. This lies between the black hat and white hat approaches: the methods employed avoid getting the site penalized, but they do not aim to produce the best content for users and are instead focused entirely on improving search engine rankings.

Search engines may penalize sites they discover using black hat methods, either by reducing their rankings or eliminating their listings from their databases altogether. Such penalties can be applied either automatically by the search engines' algorithms, or by a manual site review. One example was the February 2006 Google removal of both BMW Germany and Ricoh Germany for use of deceptive practices. Both companies, however, quickly apologized, fixed the offending pages, and were restored to Google's list.

Legal precedents

On October 17, 2002, SearchKing filed suit in the United States District Court, Western District of Oklahoma, against the search engine Google. SearchKing's claim was that Google's tactics to prevent spamdexing constituted a tortious interference with contractual relations. On May 27, 2003, the court granted Google's motion to dismiss the complaint because SearchKing "failed to state a claim upon which relief may be granted."

In March 2006, KinderStart filed a lawsuit against Google over search engine rankings. KinderStart's website was removed from Google's index prior to the lawsuit, and the amount of traffic to the site dropped by 70%. On March 16, 2007, the United States District Court for the Northern District of California (San Jose Division) dismissed KinderStart's complaint without leave to amend, and partially granted Google's motion for Rule 11 sanctions against KinderStart's attorney, requiring him to pay part of Google's legal expenses.

Sunday, 7 February 2016

Saturday, 6 February 2016

Graphics

Graphics (from Greek γραφικός graphikos, 'something written', e.g. autograph) are visual images or designs on some surface, such as a wall, canvas, screen, paper, or stone, to inform, illustrate, or entertain. In contemporary usage, it includes pictorial representation of data, as in computer-aided design and manufacture, in typesetting and the graphic arts, and in educational and recreational software. Images that are generated by a computer are called computer graphics.

Examples are photographs, drawings, line art, graphs, diagrams, typography, numbers, symbols, geometric designs, maps, engineering drawings, or other images. Graphics often combine text, illustration, and color. Graphic design may consist of the deliberate selection, creation, or arrangement of typography alone, as in a brochure, flyer, poster, web site, or book without any other element. Clarity or effective communication may be the objective, association with other cultural elements may be sought, or merely the creation of a distinctive style.

Graphics can be functional or artistic. The latter can be a recorded version, such as a photograph, or an interpretation by a scientist to highlight essential features, or an artist, in which case the distinction with imaginary graphics may become blurred.

History

The earliest graphics known to anthropologists studying prehistoric periods are cave paintings and markings on boulders, bone, ivory, and antlers, which were created during the Upper Palaeolithic period from 40,000–10,000 B.C. or earlier. Many of these were found to record astronomical, seasonal, and chronological details. Some of the earliest graphics and drawings known to the modern world, from almost 6,000 years ago, are engraved stone tablets and ceramic cylinder seals, marking the beginning of the historic periods and the keeping of records for accounting and inventory purposes. Records from Egypt predate these: the Egyptians used papyrus as a material on which to plan the building of pyramids, and they also used slabs of limestone and wood. From 600–250 B.C., the Greeks played a major role in geometry. They used graphics to represent their mathematical theories, such as the Circle Theorem and the Pythagorean theorem.

In art, "graphics" is often used to distinguish work in a monotone and made up of lines, as opposed to painting.

Drawing

Drawing generally involves making marks on a surface by applying pressure from a tool, or moving a tool across a surface. In graphical drawing a tool is always used, since without tools the drawing would be art. Graphical drawing is instrumentally guided drawing.

Printmaking

Woodblock printing, including the printing of images, is first seen in China after paper was invented (about A.D. 105). In the West the main techniques have been woodcut, engraving and etching, but there are many others.

Etching

Etching is an intaglio method of printmaking in which the image is incised into the surface of a metal plate using an acid. The acid eats the metal, leaving behind roughened areas or, if the surface exposed to the acid is very thin, burning a line into the plate. The use of the process in printmaking is believed to have been invented by Daniel Hopfer (c. 1470–1536) of Augsburg, Germany, who decorated armour in this way.

Etching is also used in the manufacturing of printed circuit boards and semiconductor devices.

Line art

Line art is a rather non-specific term sometimes used for any image that consists of distinct straight and curved lines placed against a (usually plain) background, without gradations in shade (darkness) or hue (color) to represent two-dimensional or three-dimensional objects. Line art is usually monochromatic, although lines may be of different colors.

Illustration

An illustration is a visual representation such as a drawing, painting, photograph or other work of art that stresses subject more than form. The aim of an illustration is to elucidate or decorate a story, poem or piece of textual information (such as a newspaper article), traditionally by providing a visual representation of something described in the text. The editorial cartoon, also known as a political cartoon, is an illustration containing a political or social message.

Illustrations can be used to display a wide range of subject matter and serve a variety of functions, such as:

- giving faces to characters in a story

- displaying a number of examples of an item described in an academic textbook (e.g. a typology)

- visualising step-wise sets of instructions in a technical manual

- communicating subtle thematic tone in a narrative

- linking brands to the ideas of human expression, individuality and creativity

- making a reader laugh or smile, or simply entertaining them

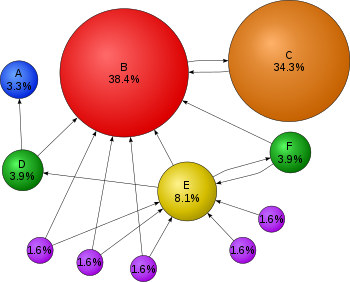

Graphs

A graph or chart is an information graphic that represents tabular, numeric data. Charts are often used to make it easier to understand large quantities of data and the relationships between different parts of the data.
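To illustrate how a chart turns tabular numbers into something easier to compare, here is a minimal Python sketch that renders (label, value) rows as a horizontal text bar chart, scaling each bar so the largest value spans a fixed width. The function name `bar_chart` and the sample data are hypothetical; real charting tools add axes, scales and color.

```python
def bar_chart(rows: list[tuple[str, float]], width: int = 20) -> str:
    """Render (label, value) rows as a horizontal text bar chart.

    Bars are scaled so that the largest value spans `width` characters;
    each line shows the label, the bar, and the raw value.
    """
    if not rows:
        return ""
    largest = max(value for _, value in rows)
    label_width = max(len(label) for label, _ in rows)
    lines = []
    for label, value in rows:
        # Proportional bar length; non-positive values get an empty bar.
        length = round(value / largest * width) if largest > 0 else 0
        lines.append(f"{label.ljust(label_width)} | {'#' * length} {value:g}")
    return "\n".join(lines)


# Hypothetical daily visitor counts, as they might appear in a table.
print(bar_chart([("Mon", 12), ("Tue", 30), ("Wed", 18)], width=10))
```

Even with three rows, the bars make it immediately visible that Tuesday's value is the largest, which is the relationship a reader would otherwise have to work out from the numbers.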

Diagrams

A diagram is a simplified and structured visual representation of concepts, ideas, constructions, relations, statistical data, etc., used to visualize and clarify the topic.

Symbols

A symbol, in its basic sense, is a representation of a concept or quantity; i.e., an idea, object, concept, quality, etc. In more psychological and philosophical terms, all concepts are symbolic in nature, and representations for these concepts are simply token artifacts that are allegorical to (but do not directly codify) a symbolic meaning, or symbolism.

Maps

A map is a simplified depiction of a space, a navigational aid which highlights relations between objects within that space. Usually, a map is a two-dimensional, geometrically accurate representation of a three-dimensional space.

One of the first 'modern' maps was made by Waldseemüller.
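One simple way to turn positions on the roughly spherical Earth into flat map coordinates is the equirectangular projection, sketched below in Python. This is an illustrative choice, not a method named above: the function name is hypothetical and the Earth is treated as a sphere of radius 6,371 km. No flat map can preserve every distance on a sphere, and in this projection east-west distances are true to scale only at the chosen reference latitude.

```python
import math

# Mean Earth radius in kilometres; the sketch treats the Earth as a sphere.
EARTH_RADIUS_KM = 6371.0


def equirectangular(lat_deg: float, lon_deg: float,
                    ref_lat_deg: float = 0.0,
                    radius: float = EARTH_RADIUS_KM) -> tuple[float, float]:
    """Project a latitude/longitude pair (degrees) to planar x/y coordinates.

    x = R * lon * cos(ref_lat) and y = R * lat, with all angles in radians.
    East-west distances are true to scale only at the reference latitude.
    """
    lat = math.radians(lat_deg)
    lon = math.radians(lon_deg)
    x = radius * lon * math.cos(math.radians(ref_lat_deg))
    y = radius * lat
    return x, y


# Planar position (in km) of a point at 51.5 degrees N, 0.13 degrees W.
print(equirectangular(51.5, -0.13, ref_lat_deg=51.5))
```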

Photography

One difference between photography and other forms of graphics is that a photographer, in principle, just records a single moment in reality, with seemingly no interpretation. However, a photographer can choose the field of view and angle, and may also use other techniques, such as various lenses to distort the view or filters to change the colors. In recent times, digital photography has opened the way to countless fast but powerful manipulations. Even in the early days of photography, there was controversy over photographs of enacted scenes that were presented as 'real life' (especially in war photography, where it can be very difficult to record the original events). Shifting the viewer's eyes ever so slightly with simple pinpricks in the negative could have a dramatic effect.

The choice of the field of view can have a strong effect, effectively 'censoring out' other parts of the scene, accomplished by cropping them out or simply not including them in the photograph. This even touches on the philosophical question of what reality is. The human brain processes information based on previous experience, making us see what we want to see or what we were taught to see. Photography does the same, although the photographer interprets the scene for their viewer.

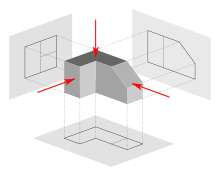

Engineering drawings

An engineering drawing is a type of technical drawing used to fully and clearly define requirements for engineered items. It is usually created in accordance with standardized conventions for layout, nomenclature, interpretation, appearance (such as typefaces and line styles), size, etc.

Computer graphics

There are two types of computer graphics: raster graphics, where each pixel is separately defined (as in a digital photograph), and vector graphics, where mathematical formulas are used to draw lines and shapes, which are then interpreted at the viewer's end to produce the graphic. Vector graphics stay sharp at any scale and often produce smaller files, but complex vector graphics can take time to render and may have larger file sizes than a raster equivalent.
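The difference between raster and vector graphics can be sketched in Python. Below, a circle is stored in vector form as a center and radius (the formula (x - cx)^2 + (y - cy)^2 <= r^2) and rasterized at whatever resolution is needed, while an image that is already rasterized can only be enlarged by repeating its pixels. The function names and the unit-square coordinate convention are assumptions for illustration; real renderers also apply anti-aliasing.

```python
def rasterize_circle(cx: float, cy: float, r: float,
                     width: int, height: int) -> list[list[int]]:
    """Rasterize a filled circle described in vector form (center, radius).

    Coordinates live in the unit square [0, 1] x [0, 1], so the same vector
    description can be rendered at any pixel resolution. A pixel is set to 1
    when its center satisfies (x - cx)^2 + (y - cy)^2 <= r^2.
    """
    grid = []
    for row in range(height):
        y = (row + 0.5) / height          # pixel-center y in unit coordinates
        line = []
        for col in range(width):
            x = (col + 0.5) / width       # pixel-center x in unit coordinates
            inside = (x - cx) ** 2 + (y - cy) ** 2 <= r * r
            line.append(1 if inside else 0)
        grid.append(line)
    return grid


def upscale_nearest(grid: list[list[int]], factor: int) -> list[list[int]]:
    """Enlarge a raster by repeating every pixel factor x factor times."""
    return [[pixel for pixel in row for _ in range(factor)]
            for row in grid for _ in range(factor)]


small = rasterize_circle(0.5, 0.5, 0.4, 8, 8)          # low-resolution raster
enlarged = upscale_nearest(small, 4)                  # 32 x 32, blocky edges
rerendered = rasterize_circle(0.5, 0.5, 0.4, 32, 32)  # 32 x 32 from the vector
```

Both results are 32 x 32 pixels, but the re-rendered vector circle follows the true edge at the finer resolution, whereas the enlarged raster keeps the coarse 8 x 8 staircase.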

In 1950, the first computer-driven display was attached to MIT's Whirlwind I computer to generate simple pictures. This was followed by MIT's TX-0 and TX-2, interactive computers that increased interest in computer graphics during the late 1950s. In 1962, Ivan Sutherland invented Sketchpad, an innovative program that influenced alternative forms of interaction with computers.

In the mid-1960s, large computer graphics research projects were begun at MIT, General Motors, Bell Labs, and Lockheed Corporation. Douglas T. Ross of MIT developed an advanced compiler language for graphics programming. S. A. Coons, also at MIT, and J. C. Ferguson at Boeing, began work in sculptured surfaces. GM developed their DAC-1 system, and other companies, such as Douglas, Lockheed, and McDonnell, also made significant developments. In 1968, ray tracing was first described by Arthur Appel of the IBM Research Center, Yorktown Heights, N.Y.
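At the heart of ray tracing is a geometric test: follow a ray from the eye into the scene and find the nearest surface it hits. The Python sketch below shows a generic modern formulation of that test for a single sphere and uses it to draw a tiny text image, one ray per character. It is not Appel's original 1968 procedure, and the scene (a unit sphere three units in front of the eye) and the function names are hypothetical.

```python
import math


def ray_sphere_hit(origin, direction, center, radius):
    """Return the distance t to the nearest hit in front of the ray, or None.

    A point on the ray is origin + t * direction. Substituting it into the
    sphere equation |p - center|^2 = radius^2 gives a quadratic
    a*t^2 + b*t + c = 0, solved here with the quadratic formula.
    """
    ox, oy, oz = (origin[i] - center[i] for i in range(3))
    dx, dy, dz = direction
    a = dx * dx + dy * dy + dz * dz
    b = 2 * (ox * dx + oy * dy + oz * dz)
    c = ox * ox + oy * oy + oz * oz - radius * radius
    disc = b * b - 4 * a * c
    if disc < 0:
        return None                      # the ray misses the sphere
    root = math.sqrt(disc)
    for t in ((-b - root) / (2 * a), (-b + root) / (2 * a)):
        if t > 1e-9:                     # ignore hits behind the ray origin
            return t
    return None


def render(width=20, height=10):
    """Cast one ray per character cell from an eye at the origin."""
    rows = []
    for j in range(height):
        y = 1 - 2 * (j + 0.5) / height   # screen y in [-1, 1], top row first
        row = ""
        for i in range(width):
            x = 2 * (i + 0.5) / width - 1
            hit = ray_sphere_hit((0, 0, 0), (x, y, 1), (0, 0, 3), 1)
            row += "#" if hit is not None else "."
        rows.append(row)
    return "\n".join(rows)


print(render())
```

A full ray tracer would go on to use the hit distance to compute the surface point, its shading, and secondary rays for shadows or reflections.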

During the late 1970s, personal computers became more powerful, capable of drawing both basic and complex shapes and designs. In the 1980s, artists and graphic designers began to see the personal computer, particularly the Commodore Amiga and Macintosh, as a serious design tool, one that could save time and draw more accurately than other methods. 3D computer graphics became possible in the late 1980s with the powerful SGI computers, which were later used to create some of the first fully computer-generated short films at Pixar. The Macintosh remains one of the most popular tools for computer graphics in graphic design studios and businesses.

Modern computer systems, from the 1980s onwards, often use a graphical user interface (GUI) to present data and information with symbols, icons and pictures rather than text. Graphics are one of the five key elements of multimedia technology.

3D graphics became more popular in the 1990s in gaming, multimedia and animation. In 1995, Toy Story, the first full-length computer-animated film, was released in cinemas, and in 1996 Quake, one of the first fully 3D games, was released. Since then, computer graphics have become more accurate and detailed, due to more advanced computers and better 3D modeling software applications, such as Maya, 3D Studio Max, and Cinema 4D.

Another use of computer graphics is screensavers, originally intended to prevent the layout of much-used GUIs from 'burning into' the computer screen. They have since evolved into true pieces of art, their practical purpose largely obsolete, since most modern screens are far less susceptible to such burn-in artifacts.

Web graphics

In the 1990s, Internet speeds increased, and Internet browsers capable of viewing images were released, the first being Mosaic. Websites began to use the GIF format to display small graphics, such as banners, advertisements and navigation buttons, on web pages. Modern web browsers can now display JPEG, PNG and, increasingly, SVG images in addition to GIFs on web pages. Support for SVG, and to some extent VML, in some modern web browsers has made it possible to display vector graphics that are clear at any size. Plugins expand the web browser functions to display animated, interactive and 3-D graphics contained within file formats such as SWF and X3D.
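Because SVG describes shapes rather than pixels, the same markup can be displayed clearly at any size. The Python sketch below uses the standard library's `xml.etree.ElementTree` to build a small SVG image whose shapes are defined once in a 100 x 100 `viewBox`; only the displayed `width` and `height` change. The function name `svg_icon` and the drawing itself are hypothetical examples.

```python
import xml.etree.ElementTree as ET

SVG_NS = "http://www.w3.org/2000/svg"


def svg_icon(size_px: int) -> str:
    """Return an SVG document showing a circle crossed by a line.

    The shapes are defined in a fixed 100 x 100 viewBox coordinate system;
    only the displayed width/height depend on size_px, so the browser scales
    the vector shapes instead of stretching pixels.
    """
    svg = ET.Element("svg", {
        "xmlns": SVG_NS,
        "width": str(size_px),
        "height": str(size_px),
        "viewBox": "0 0 100 100",
    })
    stroke = {"stroke": "black", "stroke-width": "4"}
    ET.SubElement(svg, "circle", {"cx": "50", "cy": "50", "r": "40",
                                  "fill": "none", **stroke})
    ET.SubElement(svg, "line", {"x1": "20", "y1": "50",
                                "x2": "80", "y2": "50", **stroke})
    return ET.tostring(svg, encoding="unicode")


small_icon = svg_icon(32)
large_icon = svg_icon(512)
```

Saved to a `.svg` file and opened in a browser that supports SVG, both versions should show the same crisp drawing, one at 32 pixels and one at 512 pixels, from identical shape descriptions.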

Modern web graphics can be made with software such as Adobe Photoshop, the GIMP, or Corel Paint Shop Pro. Users of Microsoft Windows have MS Paint, which many find to be lacking in features. This is because MS Paint is a drawing package and not a graphics package.

Numerous platforms and websites have been created to cater to web graphics artists and to host their communities. A growing number of people create Internet forum signatures, which generally appear after a user's post, and other digital artwork, such as photo manipulations and large graphics. With computer game developers creating their own communities around their products, many more websites are being developed to offer graphics for the fans and to enable them to show their appreciation of such games in their own gaming profiles.
